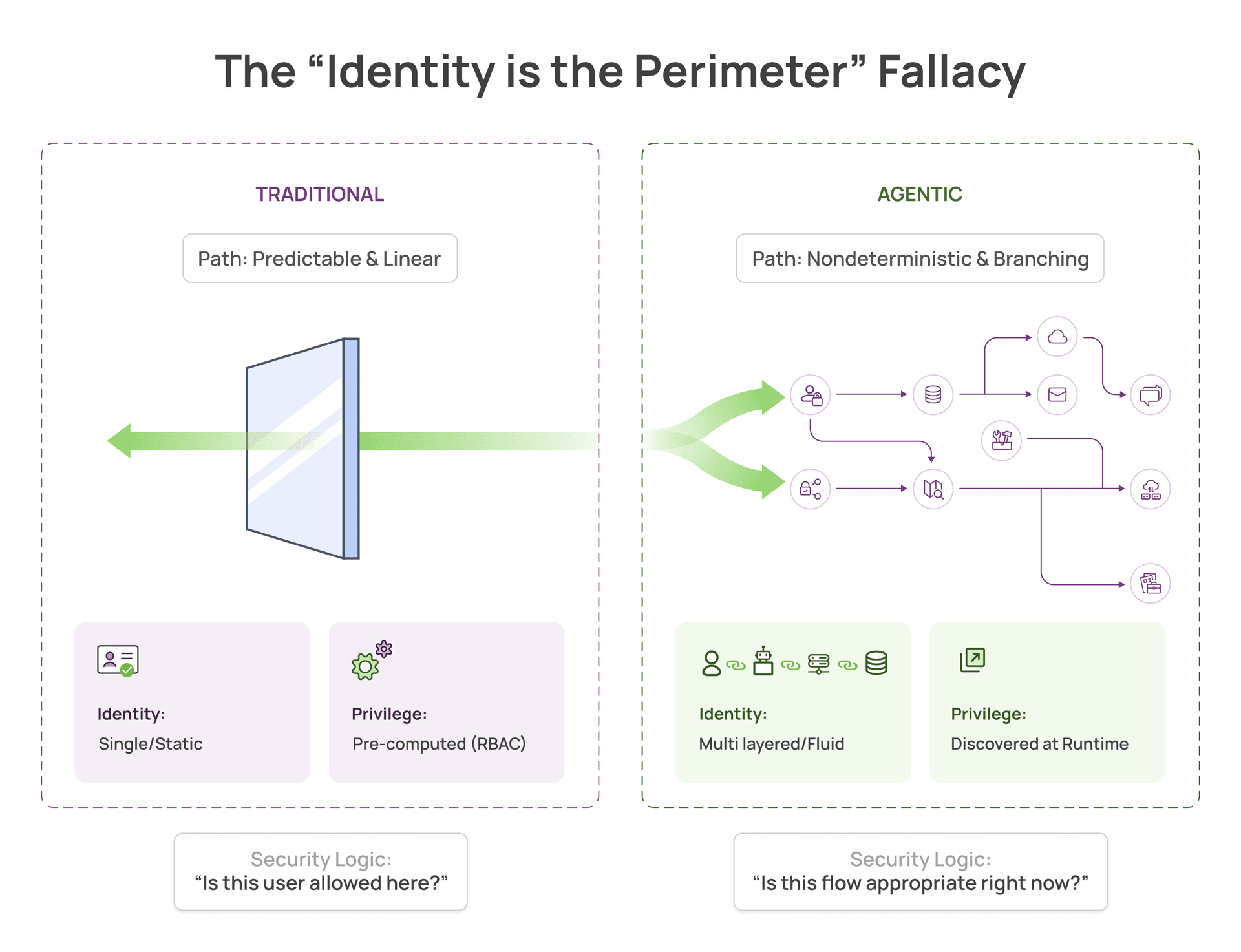

Why “identity is the perimeter” breaks for agents, and what runtime context must capture to secure them.

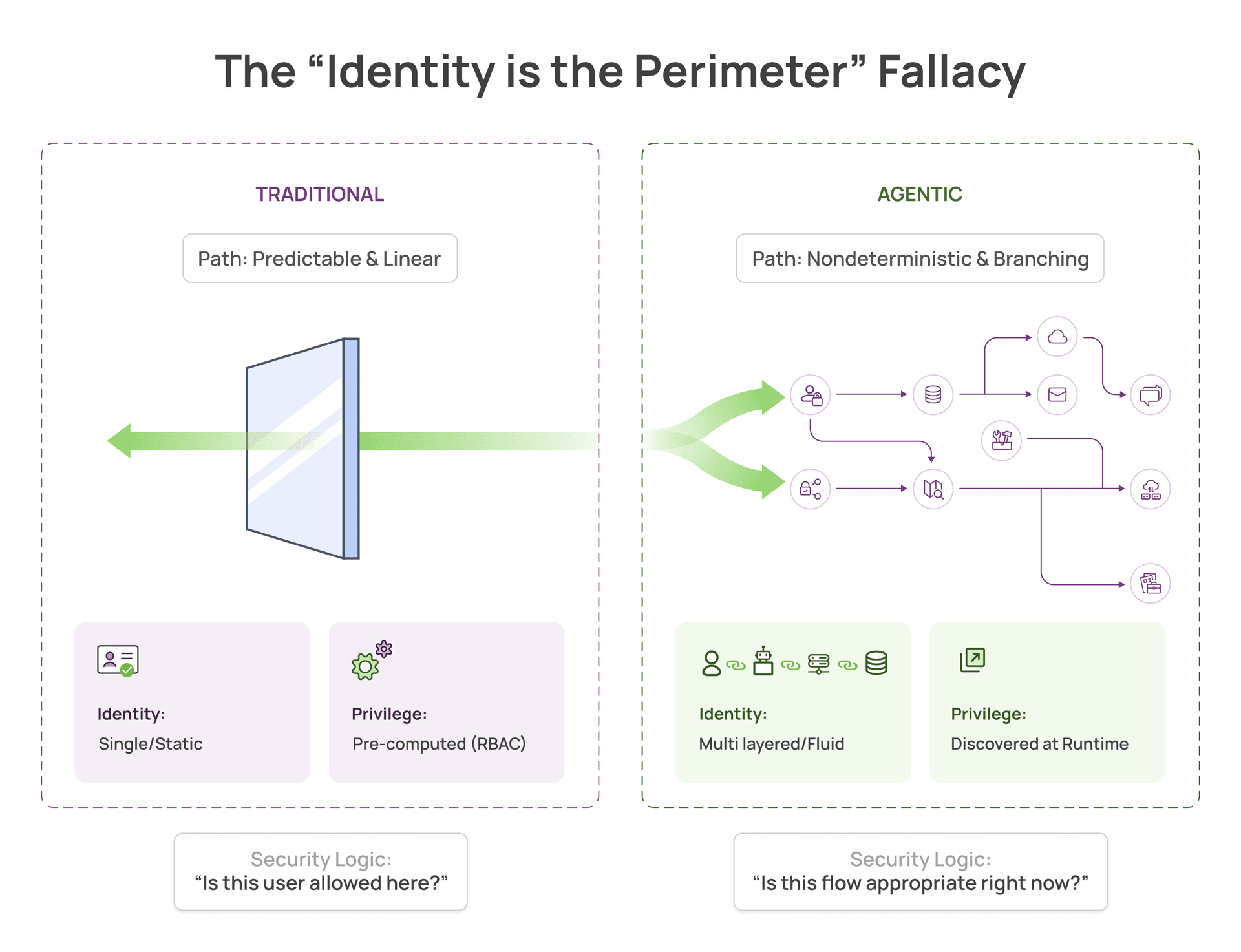

The “identity is the perimeter” narrative is everywhere in agentic security.

Identity is definitely becoming more critical as agentic adoption accelerates, but the perimeter framing is incomplete. It assumes AI behaves like a predictable session. It does not.

Agents need broad permissions to work autonomously. They integrate with Workday, query databases, access internal tools, and make decisions across multiple data sources. Traditional security validates identity at the gate, but it has limited visibility into what agents actually do with those permissions.

Once an agent has access, IAM cannot answer the most important questions: what data did it touch, and where did it send it?

The real problem: agents need broad access to be useful

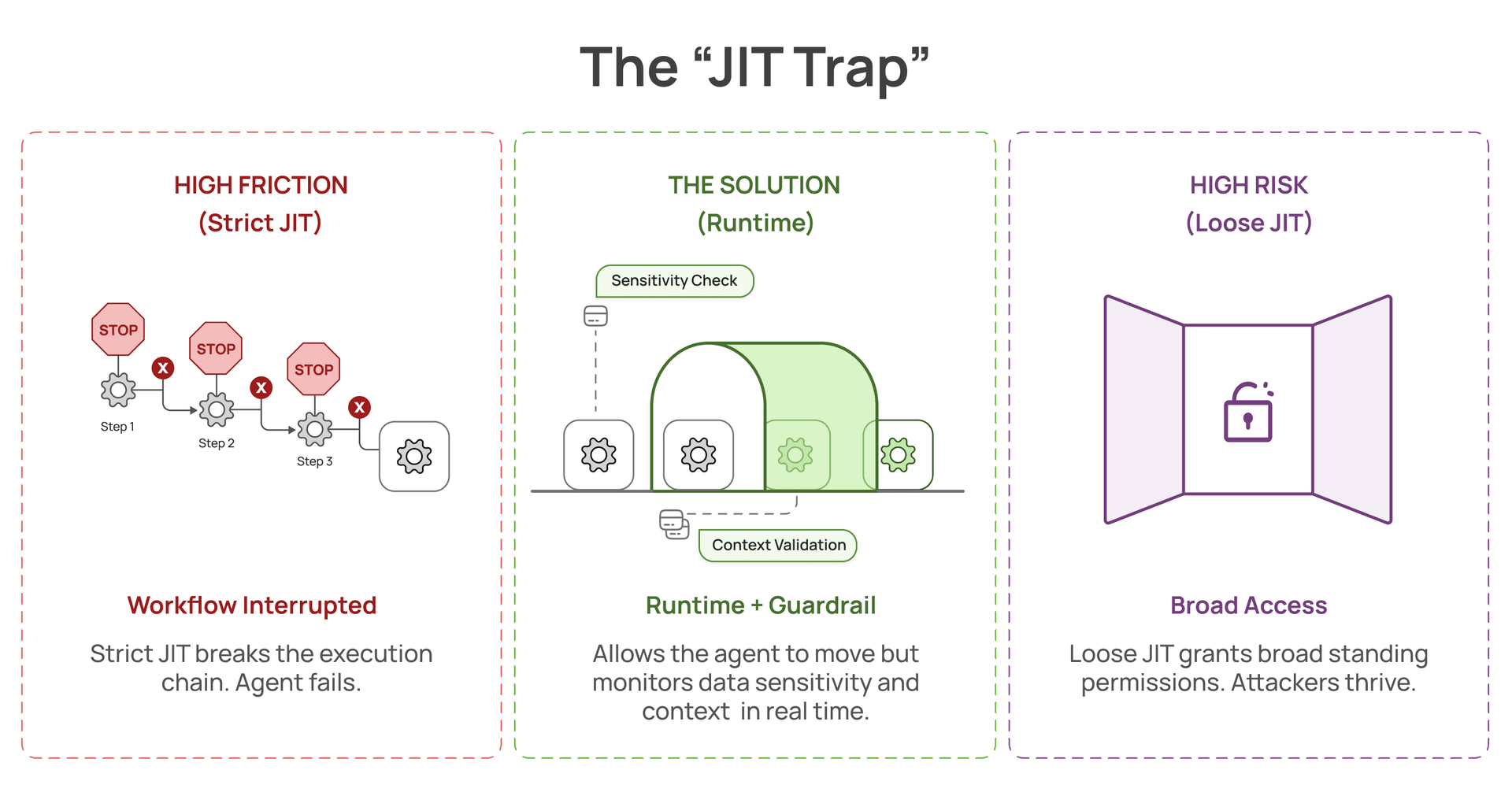

PAM and JIT were built for predictable, human-shaped access patterns. Request access, get approved, perform a known task, revoke access.

Agents do not work this way.

An agent answering “Give me insights on team performance” might:

- query org structure

- pull compensation data

- join with performance reviews

- access internal notes

- synthesize across all sources to generate an answer

You cannot predict this access path upfront. The agent discovers what it needs as it reasons through the problem. If you lock down permissions with strict JIT, the agent cannot function. If you grant broad standing permissions so it can work, you lose the security boundary.

This is not a failure of identity management. This is the inherent tension of autonomous systems.

What traditional controls can prove, and what they cannot

- PAM/JIT: “Did we approve this identity to access this resource?”

- IAM: “Did this service account authenticate to the database?”

- CASB and DLP: can see calls and content, but often struggle to attribute intent and to reason over multi-step agent behavior

What they miss is the execution chain.

User prompt: “Give me team performance insights”

↓

Agent reasoning: [calls Workday API and retrieves 500 employee records]

↓

Agent reasoning: [queries salary database and accesses compensation data]

↓

Agent reasoning: [joins with performance reviews and combines sensitive datasets]

↓

Agent response: “Here’s the summary”

From an identity perspective, every step was authorized.

Authorization is not the same as appropriateness.

From a runtime perspective, the agent accessed more data than necessary and combined sensitive information in ways that violate governance policies.

Traditional security sees: “Agent accessed Workday, agent queried employee DB”

Runtime visibility sees: “Agent pulled 500 salary records, joined with performance data, exposed compensation information the requester should not have seen”
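To make the contrast concrete, here is a minimal Python sketch of the same execution chain seen from both layers. The `Step` record, the `svc-agent` principal, the field names, and the record counts are illustrative assumptions, not the output of any real IAM or monitoring product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Step:
    principal: str   # service identity used for the call
    resource: str    # backend that was called
    authorized: bool # the only verdict the identity layer records
    fields: tuple    # fields actually returned (runtime-only knowledge)
    records: int     # number of records returned (runtime-only knowledge)


# Hypothetical trace of the "team performance insights" request.
chain = [
    Step("svc-agent", "workday", True, ("name", "manager"), 500),
    Step("svc-agent", "salary_db", True, ("salary",), 500),
    Step("svc-agent", "perf_reviews", True, ("rating", "salary"), 500),
]


def identity_view(steps):
    """What authorization logs can say: who reached what, and was it allowed."""
    return [
        f"{s.principal} -> {s.resource}: {'allowed' if s.authorized else 'denied'}"
        for s in steps
    ]


def runtime_view(steps, restricted=frozenset({"salary"})):
    """What runtime visibility adds: which restricted fields, at what volume."""
    findings = []
    for s in steps:
        hit = sorted(restricted.intersection(s.fields))
        if hit:
            findings.append(f"{s.resource}: {s.records} records with {', '.join(hit)}")
    return findings


print(identity_view(chain))
print(runtime_view(chain))
```

Every line of `identity_view` reads “allowed”, while `runtime_view` surfaces the two steps that returned salary data. The point of the sketch is only that the second view needs data the first layer never collects.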

A real example: the Glean incident

A CTO at a public company told me their workplace assistant had an HRIS integration with the permissions needed to answer org and people questions.

A user asked an innocuous question about their team.

The assistant pulled compensation fields too broadly and exposed salary information to the requester. The permissions were valid. The access was authorized. But the behavior violated governance expectations.

They only discovered this when someone noticed the exposed data. That is the governance gap: you only learn after exposure.

There was no runtime visibility into what the assistant was actually doing with HRIS permissions. No alert that sensitive salary fields were accessed at scale. No policy enforcement tied to the data flow between the HRIS connector and the user session.

The question security teams are asking is simple:

What else is happening that we do not know about?

The identity chain problem in practice

When people say “the agent’s identity,” they usually mean one thing. In real systems, multiple identities show up across a single user request.

- User to agent (delegation)

The agent acts on behalf of the user via OAuth or session inheritance.

- Agent to service (workload identity)

The agent uses a service identity to access backends (IAM roles, service accounts, API keys).

- Agent to tool server (translation boundary)

The agent calls a tool server, and the tool server executes the request using its own service identity.

This is where context gets lost. IAM logs show a legitimate service principal, but the initiating user, original prompt, and intermediate agent steps are not reliably attached.

Even with allowlisted tools, prompt injection and indirect prompt contamination can drive confused deputy behavior. The tool server faithfully executes a harmful request under a valid identity, and authorization logs stay clean.
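One way to keep the initiating context attached across the translation boundary is to carry it alongside every tool call and write it into the same audit record as the service principal. The sketch below uses Python's standard `contextvars` module. The `Attribution` record, `start_request`, `call_tool`, and the in-memory `AUDIT_LOG` are hypothetical names for illustration, and a real deployment would more likely forward this context in request metadata between processes.

```python
import contextvars
import uuid
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class Attribution:
    user: str      # initiating user
    prompt: str    # original prompt
    trace_id: str  # ties every step of one request together


# Holds the attribution for the request currently being handled.
_current = contextvars.ContextVar("attribution", default=None)

# Stand-in for an audit sink; a real system would ship this elsewhere.
AUDIT_LOG = []


def start_request(user, prompt):
    """Record who asked what before the agent starts reasoning."""
    attribution = Attribution(user, prompt, uuid.uuid4().hex)
    _current.set(attribution)
    return attribution


def call_tool(tool_name, service_principal, **params):
    """Hypothetical agent-to-tool-server call that refuses to run unattributed."""
    attribution = _current.get()
    if attribution is None:
        raise RuntimeError("tool call without attribution context")
    AUDIT_LOG.append(
        {
            "tool": tool_name,
            "principal": service_principal,  # what IAM logs already show
            "params": params,
            **asdict(attribution),           # what IAM logs usually lack
        }
    )
    return {"status": "ok"}
```

With this in place, a call such as `call_tool("hris.query", "svc-tools", fields=["name"])` made after `start_request("alice", "team insights")` produces one log entry that names both the service principal and the user behind it. This does not stop a confused deputy on its own, but it makes the harmful request traceable to its origin.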

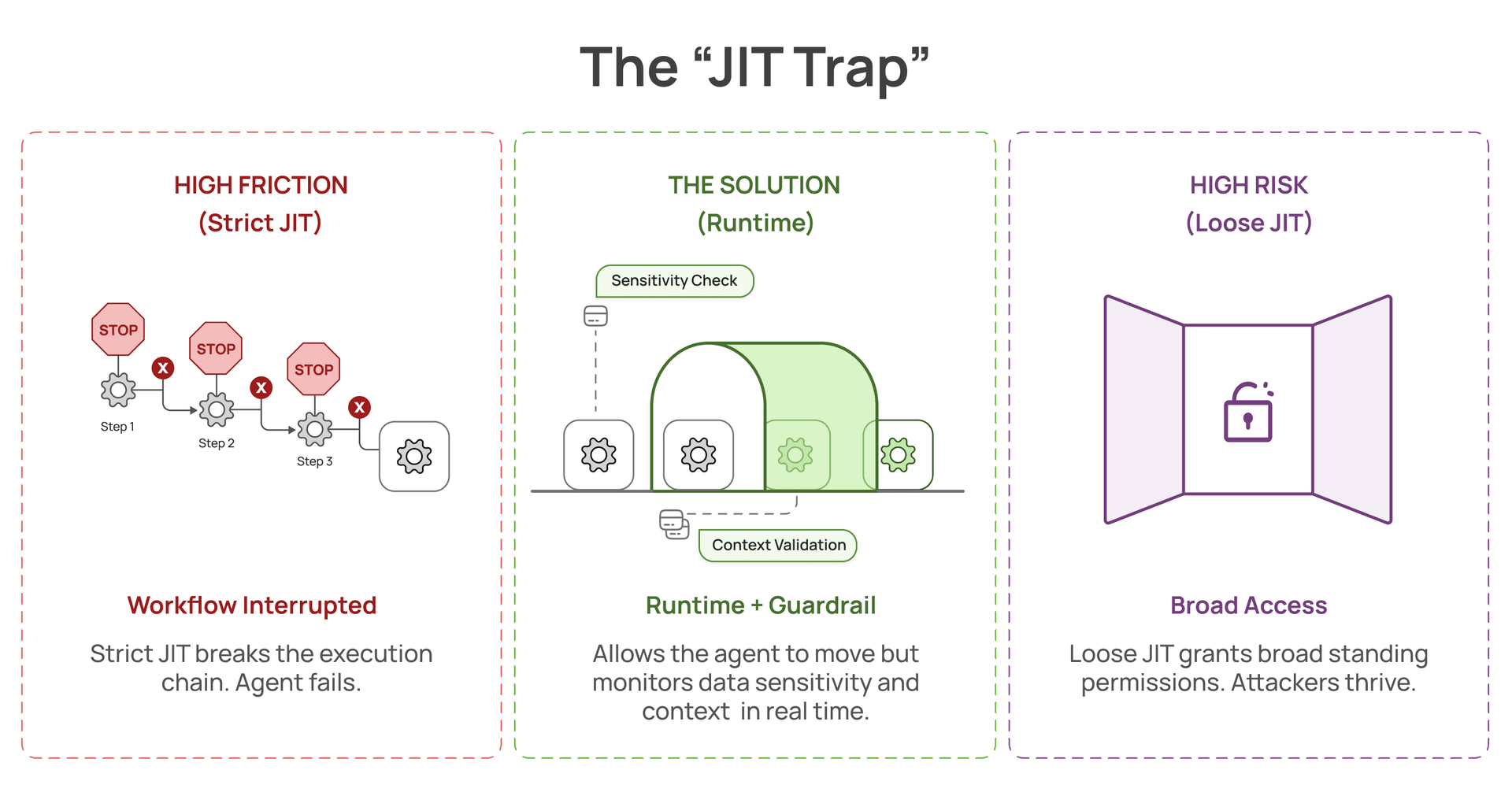

Why PAM and JIT fail for agents

PAM and JIT were built for predictable workflows. Approve access, perform task, revoke access.

Agents are nondeterministic. The same prompt can trigger different execution paths each time:

Run 1: queries HRIS, accesses 50 records

Run 2: same prompt, accesses 500 records including compensation fields

Run 3: same prompt, joins HRIS plus performance data and exports results

You cannot precompute least privilege for a workflow that changes every run.
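A small Python sketch of the three runs shows why. The resource and scope names are made up for illustration. The only claim is the set arithmetic: a grant derived from one run blocks the others, and the grant that covers all of them is the broad one.

```python
# Hypothetical (resource, scope) pairs touched by three runs of the same prompt.
runs = {
    "run_1": {("hris", "read:profile")},
    "run_2": {("hris", "read:profile"), ("hris", "read:compensation")},
    "run_3": {
        ("hris", "read:profile"),
        ("performance", "read:reviews"),
        ("export", "write:file"),
    },
}


def denied_under(grant, needed):
    """Scopes a run needs that the precomputed grant does not include."""
    return needed - grant


# "Least privilege" computed from observing run 1 only.
grant_from_run_1 = runs["run_1"]
blocked = {name: denied_under(grant_from_run_1, needed) for name, needed in runs.items()}

# The only grant that never blocks is the union of everything ever needed.
union_grant = set().union(*runs.values())

print(blocked)
print(len(union_grant))
```

Run 1 passes under its own grant, while runs 2 and 3 are each blocked on scopes nobody anticipated. The union grant unblocks all three by including compensation reads and file exports for every run, including the ones that never needed them.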

- Make JIT strict and you interrupt execution mid-flight. Usability collapses. Teams bypass controls.

- Loosen JIT and you grant broad standing permissions. You are back to the original problem.

Either way, you have added friction or increased the blast radius.

This is also why traditional CASB and DLP need to evolve:

- They inspect traffic, not agency.

- They enforce static policies, not contextual ones.

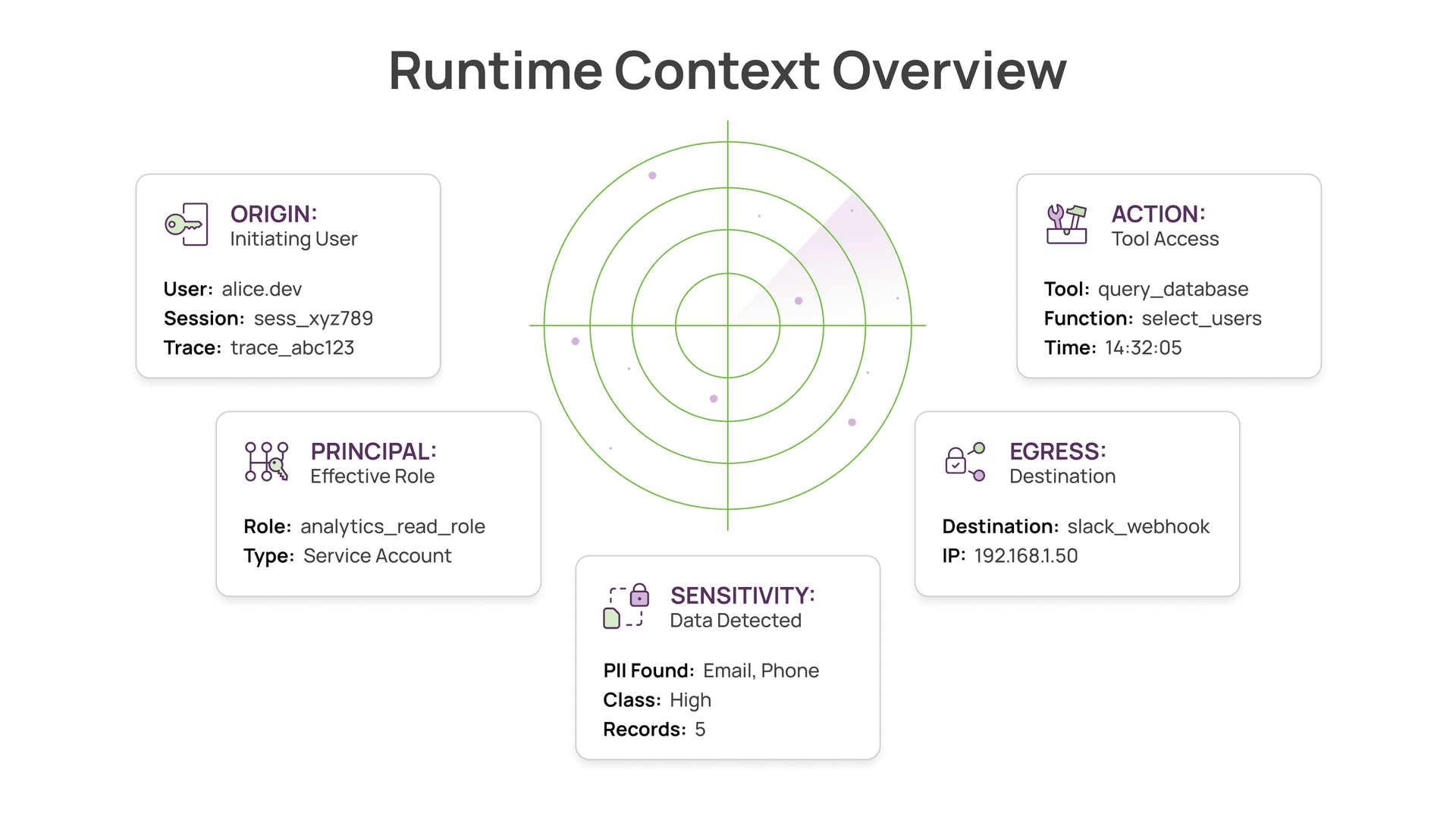

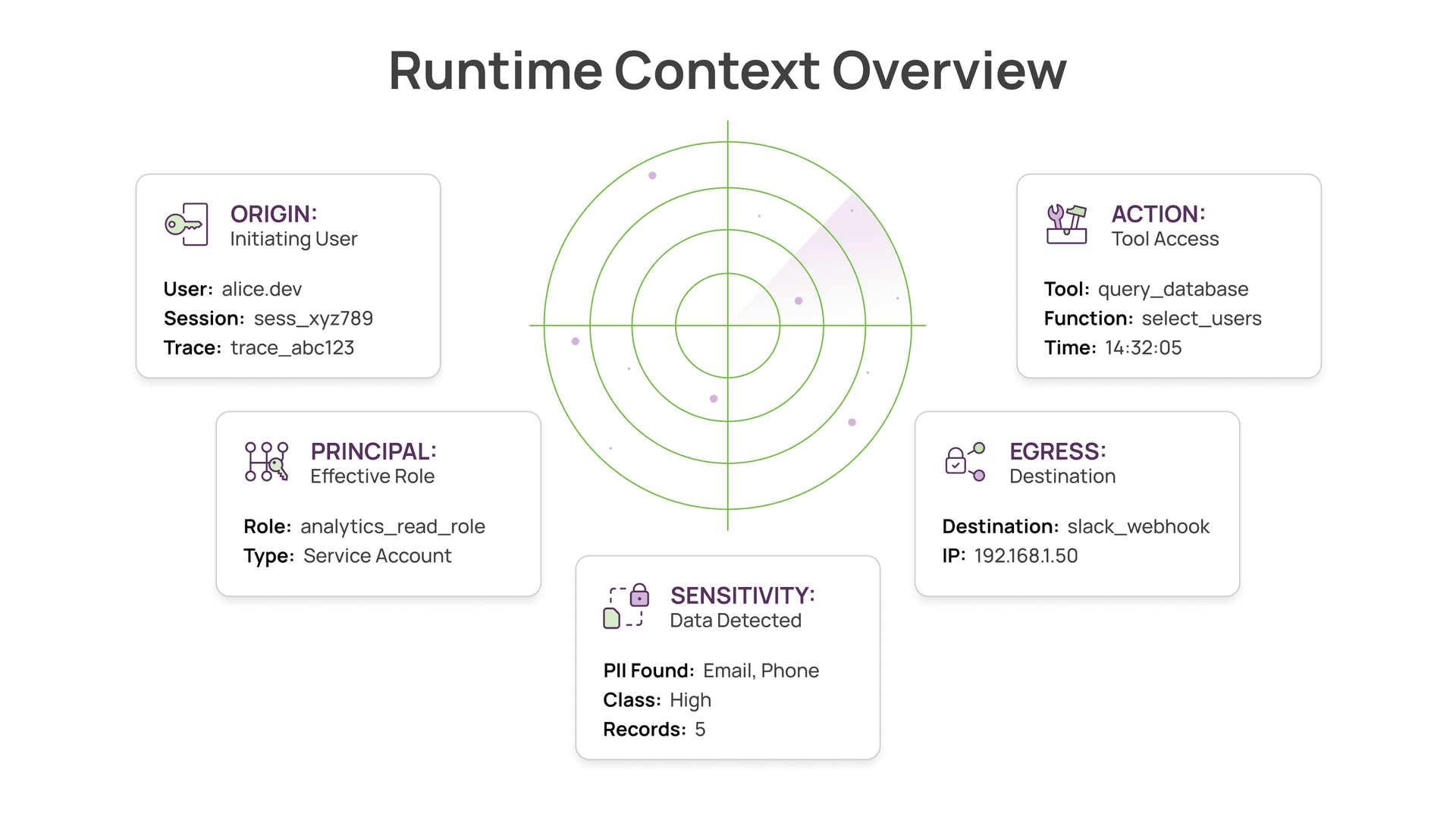

What you actually need: runtime visibility

Security for agents is not “more identity controls.” It is visibility into what agents do with the permissions you grant them.

For every action in the execution chain, capture:

- Action: tool invoked, API called, query executed

- Principal: which role, service account, or token was used

- Data accessed: sensitivity class, fields, volume

- Destination: user session, temp file, external API, webhook

- Attribution: initiating user, original prompt, agent session or trace ID
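As a rough sketch, the five items above could map onto a single event record emitted per action. The class name, field names, and example values below are assumptions chosen for illustration, not an established schema.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class AgentActionEvent:
    # Action: tool invoked, API called, query executed
    action: str
    # Principal: role, service account, or token used
    principal: str
    # Data accessed: sensitivity classes, fields, volume
    data_classes: list
    fields: list
    record_count: int
    # Destination: where the data went
    destination: str
    # Attribution: who started this and under which trace
    user: str
    prompt: str
    trace_id: str
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def to_json(self):
        """Serialize for shipping to a log pipeline."""
        return json.dumps(asdict(self), sort_keys=True)


event = AgentActionEvent(
    action="hris.query",
    principal="svc-agent",
    data_classes=["compensation"],
    fields=["name", "salary"],
    record_count=500,
    destination="user_session",
    user="alice",
    prompt="Give me insights on team performance",
    trace_id="trace-001",
)
print(event.to_json())
```

Keeping attribution on every event, rather than only on the first one in a session, means any single record can be investigated without reconstructing the whole chain first.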

Then enforce policies based on behavior:

- alert if salary fields are accessed at scale in a single session

- block joining sensitive HRIS fields with outbound destinations

- flag large sensitive exports to temp files or webhook-like sinks

These policies cannot be enforced at the identity layer. They require runtime visibility.
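The three example policies can be sketched as a function over the events of one agent session. Everything here is an assumption for illustration: the `Event` shape, the field and destination names, and the threshold of 100 records. A real enforcement point would also need to act inline rather than after the fact.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    trace_id: str
    fields: frozenset
    record_count: int
    destination: str


# Illustrative policy parameters, not recommended values.
SALARY_FIELDS = frozenset({"salary", "bonus"})
OUTBOUND = frozenset({"external_api", "webhook"})
EXPORT_SINKS = frozenset({"temp_file", "webhook"})
SCALE_THRESHOLD = 100


def evaluate(events):
    """Return (decision, reason) pairs for events that all share one session."""
    decisions = []
    salary_total = 0
    for e in events:
        sensitive = bool(e.fields & SALARY_FIELDS)
        if sensitive:
            salary_total += e.record_count
        # Block sensitive HRIS fields flowing to outbound destinations.
        if sensitive and e.destination in OUTBOUND:
            decisions.append(("block", "sensitive HRIS fields sent to outbound destination"))
        # Flag large sensitive exports to temp files or webhook-like sinks.
        if sensitive and e.destination in EXPORT_SINKS and e.record_count >= SCALE_THRESHOLD:
            decisions.append(("flag", "large sensitive export"))
    # Alert when salary fields are accessed at scale across the session.
    if salary_total >= SCALE_THRESHOLD:
        decisions.append(("alert", "salary fields accessed at scale in one session"))
    return decisions


session = [
    Event("t1", frozenset({"name"}), 50, "user_session"),
    Event("t1", frozenset({"salary"}), 500, "user_session"),
]
print(evaluate(session))
```

Note that none of the conditions look at who the principal is. They depend on fields, volume, and destination, which is why they need the runtime event stream as input.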

Takeaway

Identity matters in the agentic era, but “identity is the perimeter” is incomplete.

Agents need broad permissions to be useful. The risk is not unauthorized access. It is authorized access used in unauthorized ways.

The organizations getting this right are not adding more identity controls. They are building runtime visibility into agent behavior and data access patterns, so they can answer:

- what sensitive data did the agent touch

- did it access more than needed

- where did the data go

- did the behavior match governance policy

This requires runtime telemetry, data access monitoring, and contextual policy enforcement. The framing needs to shift from “lock down identities” to “see and govern what agents actually do.”

Disclaimer: I’m CEO of Aurva, an AI security company focused on runtime visibility for agentic systems. These views are based on my experience building AI systems at Meta and working with security teams deploying agents in production.